View From The Bench: How Preamps Quietly Make Big Gains, Part 1

Mic preamps might look straightforward, but Andy Szikla uncovers the many trade-offs of designing a ‘wire with gain’.

In one of his books about lateral thinking, Edward de Bono talks about a bunch of designers sitting around a big table trying to re-invent the airplane. One guy wants to put in more seats so they can make more money per flight. Another wants fewer seats to make it easier for the peanut cart to get down the middle. One wants bigger fuel tanks so they can fly further. Another wants smaller fuel tanks so they can fit more baggage in. On it goes around the table as paradigm challenges paradigm, and conflicts are argued about and eventually resolved. Everyone there knows they all have the same goal, and that is to make a great airplane. However, they are also aware that at the end of their deliberations, if the thing won’t fly, they’ve all failed.

Modern microphone amplifiers are to me very much like that, and a good design will be a harmonisation of different and sometimes contradictory concerns. A good microphone amplifier is not just a box that makes things louder. It has to have adjustment available to cater for a variety of audio levels at its input and output, reject external electromagnetic interference and unwanted signals, make its input moonlight as a DC power supply for microphones, exhibit low self noise, and offer high reliability. When you design a microphone amplifier, you are designing a whole system which is searching for its own equilibrium. Changing the parameters of any one section will often create an argument with its neighbour.

I can’t think of another piece of audio equipment which appears so straightforward and simple, yet hides complex interactions between its various sections.

ALL MICS SOUND DIFFERENT, SO WHICH ONE’S RIGHT?

A fully stocked recording studio will have a cornucopia of different microphones on hand. Why? Because they all sound different. So which one’s right? All of them. They all provide different flavours and colourations, which is the upbeat way of saying they all deliver sonic distortion. At its bare bones a microphone is a device which converts one form of energy — sound waves — into another form of energy — electricity. As different microphones represent different physical mechanisms for that conversion, it is understandable that results will vary between them. What we do in the studio is choose a mic which will sound best with the source we are recording; like when my Mrs Tech Bench chooses a deadly pair of shoes to complement her frock. The best studios also have the nicest sounding rooms. Do we care about sonic accuracy? Not really. Otherwise most records would be made in an anechoic chamber using a B&K reference mic, and Stephen Hawking would do the lead vocal.

This brings up a much debated point. When designing or selecting a microphone amplifier for use, what importance should be placed on pristine reproduction, free of colouration, as one might expect from the design of a Hi-Fi amplifier? I think the answer to that question is, given the choice between the clean and the colourful, an individual user will choose what is right for their recording. There is nothing wrong with taking the sound of a microphone and preserving it, or changing it, or anything in between. It gives the design team a bit of room to move, but at the end of their deliberations, if the thing doesn’t sound good — they’ve all failed.

ETCHED INTO HISTORY

The first recording equipment was not electronic, but entirely mechanical, and it was enough of an achievement just to have captured a sound and stored it.

In 1877, Thomas Edison invented a gizmo he called the Phonograph. It worked a bit like when you stretch a string between two tin cans and you can hear your cousin. The Phonograph allowed you to yell into a funnel, and the sheer force of your personality would cause a needle to cut a groove into a revolving tinfoil- or wax-coated cylinder. You could play it back via the same needle, using a hand-crank to rotate the cylinder, and the funnel would then serve as a horn, to amplify the sound back to the listener.

Initially there was no way of duplicating the cylinders, and if you wanted a hundred copies the artist would have to perform the work a hundred times. It took until 1901 to pull off making reproductions from a mould of the first cylinder, but it was a cumbersome and expensive process, and the recordings were only two minutes long anyway. Those difficulties aside, for fifty cents each, you could choose between marches, sentimental ballads, hymns, comic monologues, or a racist form of early ragtime known as coon songs.

Meanwhile, Emil Berliner of Washington DC had come up with a flat disc version of Edison’s idea, called the Gramophone, which possessed an important advantage. After the needle scratched away the wax, you could acid-etch into the zinc plate underneath to easily create a reproduction master with at least three minutes’ worth of material on it, or more if you used a larger disc. This was technically the beginning of the record industry as we know it, but whether you were a banjo player or a symphony orchestra, you still had to shout down that funnel.

Enter Alexander Graham Bell. By 1877, when Edison was inventing the Phonograph (also making him the world’s first audio engineer), Bell had just patented the Telephone, a revolution in communications which would change the world as dramatically as the internet did again a hundred years later. Like Gates and Zuckerberg in powdered wigs, Bell and Edison were riding at the helm of a new era.

A year later Edison created the Carbon Microphone, which was arguably the first serious piece of pro audio kit ever. It consisted of carbon granules squished between two metal plates. Sound waves striking the plates varied the pressure on the granules, which changed the electrical resistance between them. Carbon microphones were a great leap forward. They were not high-fidelity devices, so their dominance in professional audio was limited, but their inherent simplicity and durability made them a standard component in telephones for the next hundred years.

Edison’s carbon microphones were also ingeniously reconfigured as the first electronic amplifiers. [1] Instead of using sound waves, a mechanical plunger was pressed against the carbon granules and, similar to a loudspeaker, varied its pressure magnetically in response to an audio source. The resulting signal that emerged on the wire could be as much as 100 times (40dB) louder than the original. These carbon amplifiers were used extensively in the new telephone networks, to boost diminishing signals in long-distance cables.

In the years that followed, it was all about the telephone, and most of the important advances in electronic audio and amplification were made by people working for two subsidiaries of AT&T, the company founded by Bell. Western Electric and Bell Laboratories variously developed the vacuum tube, the first condenser microphone, some of the first valve amplifiers, and even invented the decibel (named after Bell) and negative feedback, but it took a while for these advances to find their way back into the record industry.

The oldest known electronic recording is a document of the burial of the Unknown Soldier in Westminster Abbey, London, in 1920. The equipment used was a high output carbon microphone connected directly to a record cutting lathe. The result is noisy, fuzzy and indistinct, and like everything else, you can find a copy of it on YouTube. It also marks a starting point in the appearance of the first electronic recording systems, which were all ‘direct to disc’ and quickly saw the old mechanical technology wiped out of existence.

By 1925, Western Electric’s ‘Westrex’ electrical recording system had established itself as the market leader, and did away with carbon microphones in favour of the sonically superior condenser mics. Class A valve amplifiers then boosted the weaker signal up to cutting lathe level, for the production of a wax master. These kinds of systems were commissioned into purpose-built studios all around the world, including the brand new Abbey Road facility in London (at that time, the largest recording studio in the world), and so the stage was set for the beginning of the musical recording era as we know it.

Over the next 20 years the design of better sounding and higher fidelity microphones and amplifiers was to follow, with new researchers like EMI Hayes Laboratories setting many benchmarks, and manufacturers like Neumann producing classic equipment that is still hallowed today. Still, direct to disc recording remained the standard until 1948 when Ampex, 3M and Bing Crosby’s money finally developed magnetic recording tape into a viable alternative.

NO GAIN NO PLAY’N

The principal and most tangible requirement of a microphone amplifier is to apply gain in the audio band of frequencies, and lots of it: useful amounts tend to range between 40 and 70dB. In voltage terms, that is an increase in amplitude of anywhere from 100 to roughly 3000 times, which is quite a lot.
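For anyone who wants to check those figures on the back of an envelope, the sketch below converts between decibels and voltage ratios using the standard 20·log10 relationship for voltage gain. The function names are my own, chosen for illustration only.

```python
import math


def db_to_voltage_ratio(gain_db):
    """Convert a voltage gain in dB to a plain multiplication factor."""
    return 10 ** (gain_db / 20)


def voltage_ratio_to_db(ratio):
    """Convert a voltage multiplication factor to a gain in dB."""
    return 20 * math.log10(ratio)


if __name__ == "__main__":
    # The range of useful mic amp gains quoted in the text.
    for gain_db in (40, 60, 70):
        print(f"{gain_db} dB is a voltage gain of about x{db_to_voltage_ratio(gain_db):.0f}")
```

Run as a script, this shows 40dB working out to a factor of 100, and 70dB to a little over 3000.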

The oldest systems accomplished this amplification using an input transformer feeding class A valve circuitry, and that was the way it stayed until the invention of transistors. The transformers were wound in such a way that a small amount of gain was produced across the windings, and their output fed the valve amp, which provided whatever additional gain was required. Various other schemes have been used over the years, and I have listed some common ones below, with examples of devices that have made use of those topologies.

- Transformer to class A valve (Bill Putnam’s original UA100D and UA610 control panels)

- Transformer to class A transistor (Neve 1073 preamp)

- Transformer to op amp (Chilton CM series Broadcast desks)

- Transformerless differential transistor input (Soundcraft 400B desk)

- Transformerless instrumentation amp IC (Klark Teknik Midas XL200 desks)

Interesting, at least to me, is that right from the get-go all of the above methods employed a ‘differential input’ as a way of suppressing unwanted external noise — and noise is the second most important matter a microphone amplifier must face.

TAKE A LONG LINE…

Because a microphone cable is made from a long bit of wire it works pretty well as an antenna, and will receive all manner of emissions from radio broadcasts, digital TV, light switches sparking and the guy next door operating his hand drill. Then on top of all that, you have your mains AC voltage humming along at 50 or 60Hz (depending on where you live). If all that junk infects our mic amp and gets amplified 3000 times as well, then we may as well call off the session and spend the night drinking. Differential inputs are our main defence against these troublesome unwanted signals, and a typical audio transformer can attenuate them by 100dB or more.

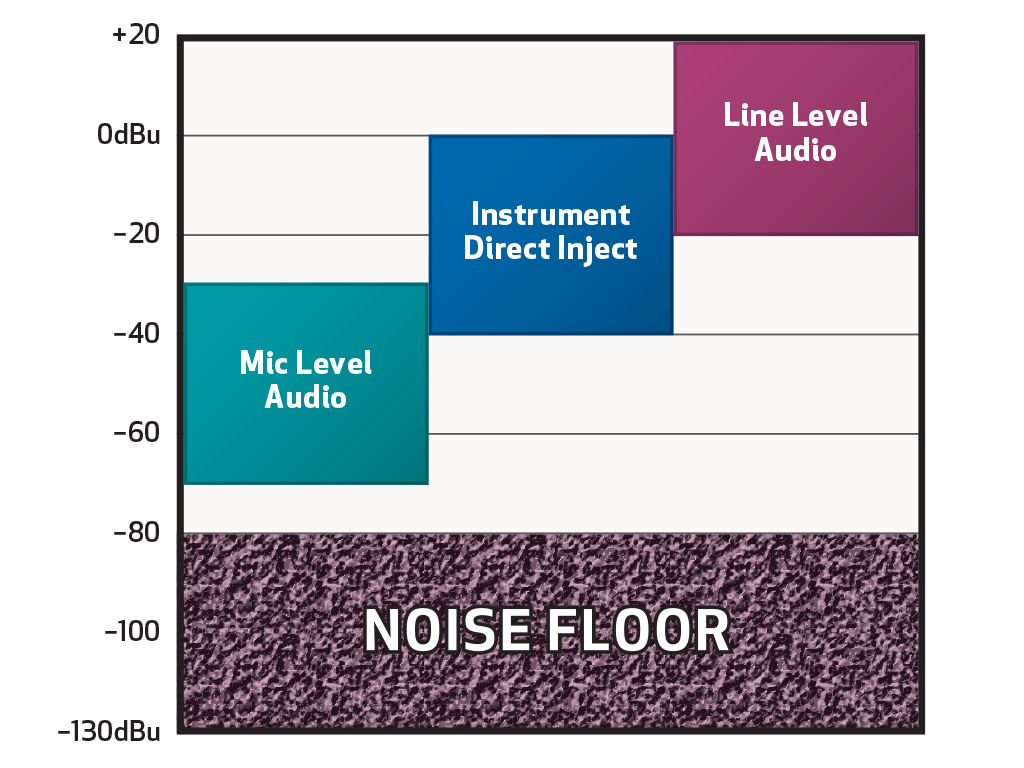

In addition to our external woes, the components themselves produce noise, so every system has it, and you can hear it as hiss when you turn up the volume. For frequencies under 10Hz, semiconductors will produce a kind of crackle, like the slow grinding of metal on stone, as random charge carriers within the silicon combine or break apart. In a mic amp where the designers haven’t troubled themselves about noise, there can easily be something lurking in the background with an amplitude well into the hundreds of microvolts. We call that the ‘noise floor’ and what we want is for our audio signal to be as far above it as possible. That is one of the reasons why most of the downstream gear you are likely to use is designed to operate with signals of around one volt or so (professional line level signals of +4dBu correspond to an amplitude of about 1.228 volts RMS). Processing our wanted audio at line levels in the region of 80dB above the noise floor means it is far easier to keep it clear of all the unwanted crap, internal and external. [2] A superior mic amp will have a very low noise floor, but in our inferior system a delicate ribbon microphone might only be in the clear by 20dB or less, and when we turn it up will sound like our artist is playing a tune in the back yard, while watering the garden.
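The arithmetic behind those numbers is easy enough to sketch. The example below converts dBu to volts (taking 0dBu as roughly 0.775 volts RMS, the standard reference) and works out how far a signal sits above a noise floor. The 100 microvolt noise floor and the 1 millivolt ribbon mic signal are assumed round figures picked purely for illustration, and the function names are hypothetical.

```python
import math

# 0 dBu is defined as the voltage that dissipates 1 mW in 600 ohms,
# which is sqrt(0.6), or about 0.7746 V RMS.
DBU_REFERENCE_VOLTS = math.sqrt(0.6)


def dbu_to_volts(level_dbu):
    """Convert a level in dBu to volts RMS."""
    return DBU_REFERENCE_VOLTS * 10 ** (level_dbu / 20)


def margin_above_noise_db(signal_volts, noise_volts):
    """How many dB the signal sits above the noise floor."""
    return 20 * math.log10(signal_volts / noise_volts)


if __name__ == "__main__":
    noise_floor_volts = 100e-6   # assumed: a noisy system, 100 microvolts
    ribbon_mic_volts = 1e-3      # assumed: a quiet source, 1 millivolt

    line_level_volts = dbu_to_volts(4)
    print(f"+4 dBu is {line_level_volts:.3f} V RMS")
    print(f"Line level margin: {margin_above_noise_db(line_level_volts, noise_floor_volts):.1f} dB")
    print(f"Ribbon mic margin: {margin_above_noise_db(ribbon_mic_volts, noise_floor_volts):.1f} dB")
```

With these assumed figures, line level lands a bit over 80dB clear of the noise, while the ribbon mic scrapes by with 20dB.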

This helps explain why you should avoid plugging a line level output into a mic level input. Every time you apply gain to a signal, you are also amplifying noise. [3] If you have audio that is a happy distance from the noise floor, then it is not a good idea to turn it down, just so you can amplify it up again and bring the noise floor with it. It’s much wiser to connect line outputs to line inputs at the same level (see diagram 3).

We no longer live in the era when it was good enough simply to achieve a result, and now when designers set out to develop a microphone amplifier that performs better than the average, noise management is a major subject that needs to be considered from the very first sketches. There is a subtle trade-off between noise and gain, and a more palpable one between noise and bandwidth. It can sometimes play out that several low-gain stages cascaded or added together might produce the same overall amplification with a lower noise figure than one single high-gain stage. But will that muck up the input impedance seen by the microphone? Or make our external noise rejection less effective? And how much frequency bandwidth do we want? Do we restrict it in order to lower noise, especially under 10Hz, or will that upset our many customers doing whale music? Or should we give them a switch that makes our amplifier operate down to DC?
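One way to put a number on the noise versus bandwidth trade-off is the textbook formula for thermal (Johnson) noise in a resistance, where the noise voltage grows with the square root of the bandwidth. The article itself does not spell this out, so treat what follows as a rough sketch under my own assumptions: a 150 ohm source resistance standing in for a microphone, a temperature of 290 kelvin, and no contribution at all from the amplifier.

```python
import math

BOLTZMANN = 1.380649e-23              # joules per kelvin
DBU_REFERENCE_VOLTS = math.sqrt(0.6)  # 0 dBu, about 0.7746 V RMS


def thermal_noise_volts(resistance_ohms, bandwidth_hz, temperature_k=290.0):
    """RMS thermal noise voltage of a resistance: sqrt(4 * k * T * R * B)."""
    return math.sqrt(4 * BOLTZMANN * temperature_k * resistance_ohms * bandwidth_hz)


def volts_to_dbu(volts):
    """Convert volts RMS to a level in dBu."""
    return 20 * math.log10(volts / DBU_REFERENCE_VOLTS)


if __name__ == "__main__":
    source_ohms = 150.0  # assumed stand-in for a microphone's source resistance
    for bandwidth_hz in (20_000, 40_000, 80_000):
        noise = thermal_noise_volts(source_ohms, bandwidth_hz)
        print(f"{bandwidth_hz / 1000:.0f} kHz bandwidth: "
              f"{noise * 1e9:.0f} nV RMS ({volts_to_dbu(noise):.1f} dBu)")
```

Under these assumptions the source alone contributes about -131dBu over a 20kHz bandwidth, and every doubling of bandwidth lifts that by about 3dB before the amplifier has added anything of its own.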

So finally, here we are at the big table where one argument starts to affect another, and hopefully the designer will find that process satisfying, and feel energised as they look deep into all the challenges for a unifying solution. Failing that, they might look deep into a beer.

In the second part of this article, I will explain how differential inputs go about their important work of attenuating noise, introduce several other mic amp essentials, and talk about the design process, and the pursuit of what some people refer to as the ghost in the machine: that elusive quality of sounding ‘good’.
